Published Work — Papers, Playbooks, Code

BOOKS.

The Enterprise Agentic Platform

Architecture, Patterns, and the AI Operating System. A Technical Reference for Building Governed, Scalable Agentic Systems in the Enterprise.

Every company will build an agentic platform. Gartner predicts 40% of these projects will be cancelled by 2027, not because the technology fails, but because the governance layer was never built. This is the blueprint for the other 60%. Twelve chapters on the full ecosystem (protocols, frameworks, LLMs, guardrails, observability), the six implementation paths with honest trade-offs (Microsoft, Salesforce, AWS, Google Cloud, Databricks, DIY), the four-layer AI Operating System architecture, and a week-by-week 90-day plan.

Download the playbook →

From Autonomous Agents To Accountable Systems

The Enterprise Playbook for High-Trust, High-ROI AI

The "AI agent" hype is a strategic trap. Enterprises don't need more autonomy. They need accountability. This playbook argues that the durable asset isn't the agent, it's the Enterprise AI Operating System: the architectural blueprint that turns ungoverned experiments into a governed, auditable, reusable capability. Includes a 3-step, 90-day roadmap for leaders who want to stop chasing features and start owning the platform.

Download the playbook →

THE AI OS SUITE.

Six open-source libraries. A toolkit for AI agent governance, observability, auth, and reliability. These are the pieces you need.

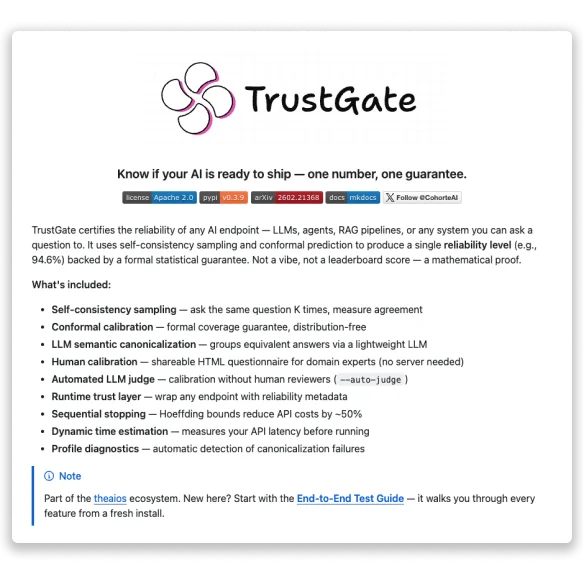

TrustGate

Black-box reliability certification for AI agents via self-consistency sampling and conformal calibration. The production implementation of the TMLR paper. Exact, finite-sample, distribution-free guarantees. No retraining, no white-box access.

View on GitHub →

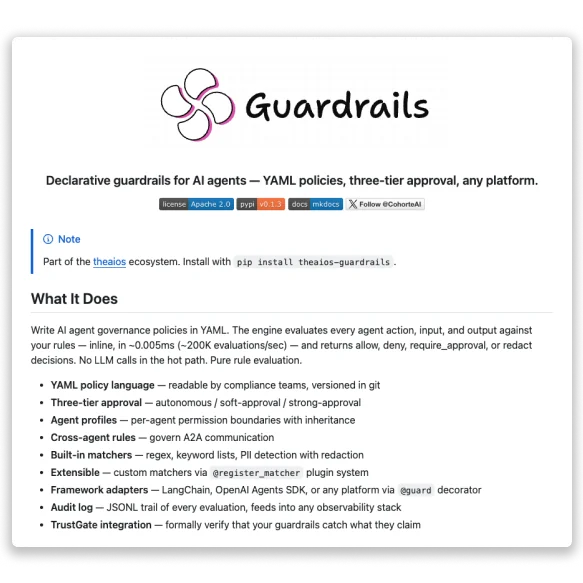

Guardrails

Declarative YAML-based policy engine for AI agent guardrails. Define what an agent can and can't do in a config file, not in prompt engineering. Auditable, reviewable, diff-able.

View on GitHub →
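
To make the "config file, not prompt engineering" idea concrete, here is a minimal sketch of a declarative policy check. It is an illustration only: the schema (`default`, `rules`, `effect`, `tool`, `max_recipients`), the agent name, and the `evaluate` function are hypothetical and are not taken from the Guardrails library. The policy is written as the Python dict a YAML file might parse into, so the example needs no YAML dependency.

```python
from fnmatch import fnmatchcase

# Hypothetical policy, shown as the dict a YAML config might parse into.
POLICY = {
    "agent": "billing-assistant",
    "default": "deny",
    "rules": [
        {"effect": "deny", "tool": "db.*", "reason": "no direct database access"},
        {"effect": "allow", "tool": "crm.read_*"},
        {"effect": "allow", "tool": "email.send", "max_recipients": 1},
    ],
}


def evaluate(policy, tool, **params):
    """Return (effect, reason) for a tool call; the first matching rule wins."""
    for rule in policy["rules"]:
        if not fnmatchcase(tool, rule["tool"]):
            continue
        # A matching rule with a violated constraint turns into a denial.
        limit = rule.get("max_recipients")
        if limit is not None and params.get("recipients", 0) > limit:
            return "deny", f"{tool}: recipient limit of {limit} exceeded"
        return rule["effect"], rule.get("reason", f"matched rule for {rule['tool']}")
    # Nothing matched: fall back to the policy-wide default.
    return policy["default"], "no rule matched"


if __name__ == "__main__":
    print(evaluate(POLICY, "crm.read_account"))
    print(evaluate(POLICY, "email.send", recipients=3))
    print(evaluate(POLICY, "shell.exec"))
```

Because the rules are plain data, a change to what the agent may do shows up as a reviewable diff rather than as an edited prompt.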

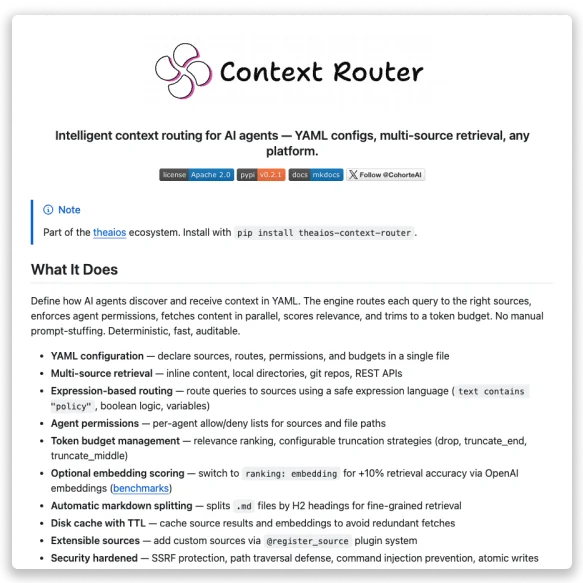

Context-Router

Intelligent context routing for agentic systems. Decides what context to load, for which step, and at what cost, instead of jamming everything into every call. Built for systems where token economics matter.

View on GitHub →
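
One way to picture "what context, for which step, at what cost" is a budgeted selection per step. The sketch below is an assumption-laden illustration, not the Context-Router algorithm or API: `ContextItem`, `route`, the relevance scores, and the greedy relevance-per-token heuristic are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    name: str
    tokens: int       # cost of loading this item; assumed to be positive
    relevance: dict   # hypothetical: step name -> relevance score in [0, 1]


def route(items, step, budget):
    """Greedily pick the items with the best relevance per token for one step."""
    candidates = [i for i in items if i.relevance.get(step, 0) > 0]
    candidates.sort(key=lambda i: i.relevance[step] / i.tokens, reverse=True)
    chosen, used = [], 0
    for item in candidates:
        # Skip anything that would push the call over its token budget.
        if used + item.tokens <= budget:
            chosen.append(item.name)
            used += item.tokens
    return chosen, used


ITEMS = [
    ContextItem("schema", 200, {"plan": 0.9, "execute": 0.3}),
    ContextItem("history", 1000, {"plan": 0.5}),
    ContextItem("policy", 300, {"execute": 0.9}),
]

if __name__ == "__main__":
    print(route(ITEMS, "plan", budget=500))
    print(route(ITEMS, "execute", budget=500))
```

A greedy ratio heuristic is not optimal in general (this is a knapsack problem), but it keeps the trade-off between relevance and token cost explicit.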

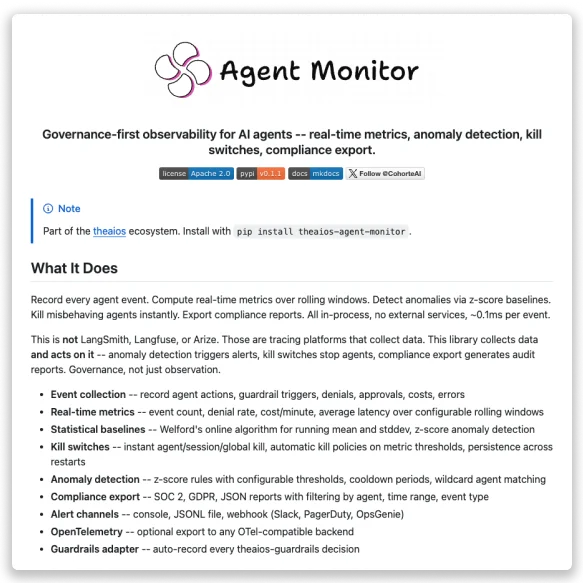

Agent-Monitor

Governance-first observability for AI agents. Built around the questions auditors actually ask, not the metrics dashboards love. Traces, decisions, and interventions are logged in a form a compliance team can read.

View on GitHub →

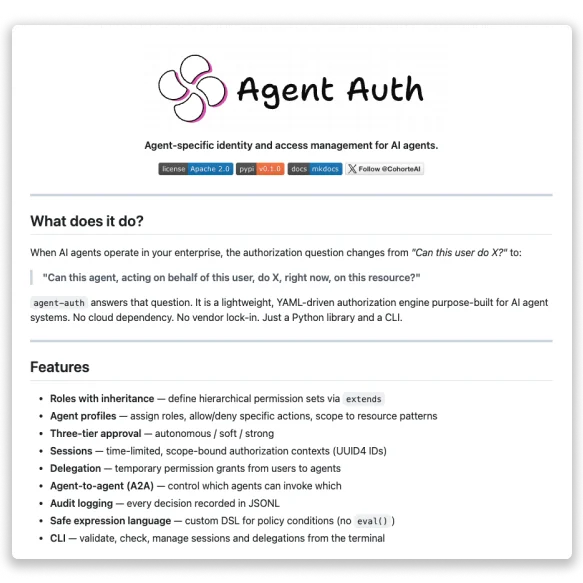

Agent-Auth

Identity and access management for AI agents. Agents aren't users. Your security model was built for users. This is the missing layer.

View on GitHub →

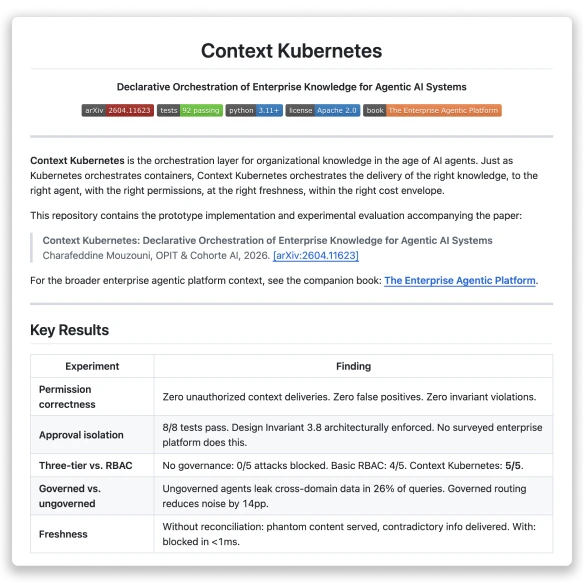

Context Kubernetes

Declarative orchestration of enterprise knowledge for agentic AI systems. Delivers the right knowledge, to the right agent, with the right permissions, at the right freshness. The reference implementation of the Context Kubernetes paper.

View on GitHub →

RESEARCH.

Context Kubernetes: Declarative Orchestration of Enterprise Knowledge for Agentic AI Systems

The hardest problem in enterprise AI isn't building agents. It's deciding what they're allowed to know, and when. This paper treats enterprise knowledge as an orchestration problem, not a retrieval problem. Agents never hold more authority than the human who deployed them. Flat permissions block zero of five attack scenarios. The full architecture blocks all five.

Read the paper →
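
The rule that agents never hold more authority than the human who deployed them can be sketched as a set intersection. This is only an illustration of the principle under simplifying assumptions: the `Principal` type, the scope strings, and both functions are hypothetical and do not come from the paper or its reference implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    name: str
    scopes: frozenset  # hypothetical scope strings, e.g. "crm:read"


def effective_scopes(deployer, requested):
    """An agent gets only the requested scopes its deployer also holds."""
    return frozenset(requested) & deployer.scopes


def can_access(deployer, requested, resource_scope):
    """True if the agent's capped scopes cover the resource."""
    return resource_scope in effective_scopes(deployer, requested)


analyst = Principal("analyst", frozenset({"crm:read", "reports:read"}))
agent_request = {"crm:read", "finance:read"}

if __name__ == "__main__":
    print(sorted(effective_scopes(analyst, agent_request)))
    print(can_access(analyst, agent_request, "finance:read"))
```

Under a flat model the agent would simply receive whatever it requested; here a request for `finance:read` is dropped because the deploying human never held it.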

Mapping The Exploitation Surface: A 10,000-Trial Taxonomy of What Makes LLM Agents Exploit Vulnerabilities

Everyone knows AI agents can exploit vulnerabilities. Nobody knew which prompt features trigger it. We ran 10,000 trials. Nine of twelve attack dimensions produce zero exploitation. But goal reframing ("you are solving a puzzle") breaks every model tested, even with explicit safety rules. A testable threat model for anyone deploying agents in production.

Read the paper →

Three Phases of Expert Routing: How Load Balance Evolves During Mixture-of-Experts Training

How do the "expert" modules inside large AI models learn to share the work? We modeled it as a congestion game and found three distinct phases: surge, stabilization, relaxation. All three are invisible if you only study the finished model. Validated on real open-source checkpoints.

Read the paper →

Black-Box Reliability Certification for AI Agents via Self-Consistency Sampling and Conformal Calibration

How do you certify an AI agent's reliability without opening the black box? Self-consistency sampling plus conformal calibration gives you a single, provable reliability score. No retraining, no access to model internals. Cuts API cost by ~50% through sequential stopping. Validated across five benchmarks and three model families.

Read the paper →
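
For readers who want the mechanics, the sketch below combines self-consistency sampling with standard split conformal calibration. It is a generic textbook construction, not the paper's exact procedure: the score definition (one minus the sampled frequency of an answer), the function names, and the omission of sequential stopping are all assumptions made for illustration.

```python
import math
from collections import Counter


def answer_frequencies(samples):
    """Self-consistency: the share of sampled responses giving each answer."""
    counts = Counter(samples)
    return {answer: c / len(samples) for answer, c in counts.items()}


def nonconformity(samples, truth):
    """Assumed score: 1 minus the frequency of the true answer among samples."""
    return 1.0 - answer_frequencies(samples).get(truth, 0.0)


def conformal_threshold(scores, alpha):
    """Split conformal quantile: the ceil((n + 1)(1 - alpha))-th smallest score."""
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return float("inf")  # too few calibration points for this alpha
    return sorted(scores)[k - 1]


def prediction_set(samples, threshold):
    """Sampled answers whose score does not exceed the calibrated threshold."""
    freqs = answer_frequencies(samples)
    return {a for a, f in freqs.items() if 1.0 - f <= threshold}


if __name__ == "__main__":
    calibration_scores = [0.0, 0.25, 0.5, 0.25, 0.0, 0.75, 0.25]
    q = conformal_threshold(calibration_scores, alpha=0.25)
    print(q, prediction_set(["a", "a", "b", "c"], q))
```

If calibration and test questions are exchangeable, the true answer's score falls at or below the threshold with probability at least 1 - alpha, and whenever the threshold is below 1.0 that means the true answer is in the returned set. Only sampled outputs and labeled calibration questions are needed, which is why no retraining or access to model internals is required.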

A Mean Field Game of Portfolio Trading and Its Consequences On Perceived Correlations

With Charles-Albert Lehalle

When thousands of traders execute similar portfolio strategies simultaneously, their collective behavior distorts the very market signals they rely on. This paper proves it mathematically and validates it on 176 US stocks over one year. The correlations you see in your data aren't the real ones.

Read the paper →

On quasi-stationary Mean Field Games models

What happens when a population of decision-makers each optimize selfishly while treating the world as static? The system still reaches a unique equilibrium and converges exponentially fast. A foundational result for modeling large populations of interacting agents.

Read the paper →

On mean field games models for exhaustible commodities trade

With P. Jameson Graber

Oil runs out. Mines deplete. What happens to market competition when firms exit one by one as their resources vanish? A mathematical framework for firms producing exhaustible resources, with rigorous equilibrium results for the N-player game.

Read the paper →

Variational mean field games for market competition

With P. Jameson Graber

Two classical models of market competition, price-setting and quantity-setting, unified under one mathematical framework. The entire equilibrium problem reduces to a single convex optimization. Cleaner theory, faster computation.

Read the paper →

A short proof of the large time energy growth for the Boussinesq system

In the Boussinesq system, which models heat-driven fluid flows, kinetic energy grows without bound over time. The opposite of what happens in Navier-Stokes. A short proof of a counterintuitive result that surprised the field.

Read the paper →